SoftmaxCrossEntropyWithLogits

TensorFlow C++ API

tensorflow::ops::SoftmaxCrossEntropyWithLogits

Computes softmax cross entropy cost and gradients to backpropagate.

Summary

Inputs are the logits, not probabilities.

Arguments:

- scope: A Scope object

- features: batch_size x num_classes matrix

- labels: batch_size x num_classes matrix. The caller must ensure that each batch of labels represents a valid probability distribution.

Returns:

- Output loss: Per example loss (batch_size vector).

- Output backprop: backpropagated gradients (batch_size x num_classes matrix).
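To make the two outputs concrete, here is a minimal reference sketch in plain C++ of the standard softmax cross-entropy computation. It is not the TensorFlow kernel: `XentResult` and `softmax_xent_reference` are hypothetical names introduced only for this illustration. It assumes each row of `labels` sums to 1, takes the loss for row i as -sum_j labels[i][j] * log(softmax(features[i])[j]), and takes the gradient with respect to the logits as softmax(features[i]) - labels[i].

```cpp
#include <algorithm>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical helper type for this sketch; not part of TensorFlow.
struct XentResult {
    std::vector<double> loss;                   // batch_size
    std::vector<std::vector<double>> backprop;  // batch_size x num_classes
};

// Reference computation (assumption: rows of `labels` are valid probability
// distributions with the same shape as `features`, and rows are non-empty).
XentResult softmax_xent_reference(
    const std::vector<std::vector<double>>& features,
    const std::vector<std::vector<double>>& labels) {
    XentResult out;
    for (std::size_t i = 0; i < features.size(); ++i) {
        const std::vector<double>& logits = features[i];

        // Subtract the row maximum before exponentiating for numerical stability.
        const double max_logit = *std::max_element(logits.begin(), logits.end());
        double sum_exp = 0.0;
        for (double z : logits) sum_exp += std::exp(z - max_logit);
        const double log_sum_exp = std::log(sum_exp);

        double loss = 0.0;
        std::vector<double> grad(logits.size());
        for (std::size_t j = 0; j < logits.size(); ++j) {
            // log(softmax(logits)[j]) computed without forming the softmax first.
            const double log_softmax = logits[j] - max_logit - log_sum_exp;
            loss -= labels[i][j] * log_softmax;
            // Gradient w.r.t. the logits: softmax minus labels.
            grad[j] = std::exp(log_softmax) - labels[i][j];
        }
        out.loss.push_back(loss);
        out.backprop.push_back(grad);
    }
    return out;
}
```

For example, with features `{{0, 0}}` and labels `{{1, 0}}`, the softmax is `{0.5, 0.5}`, so this sketch gives a loss of log 2 and a backprop row of `{-0.5, 0.5}`.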

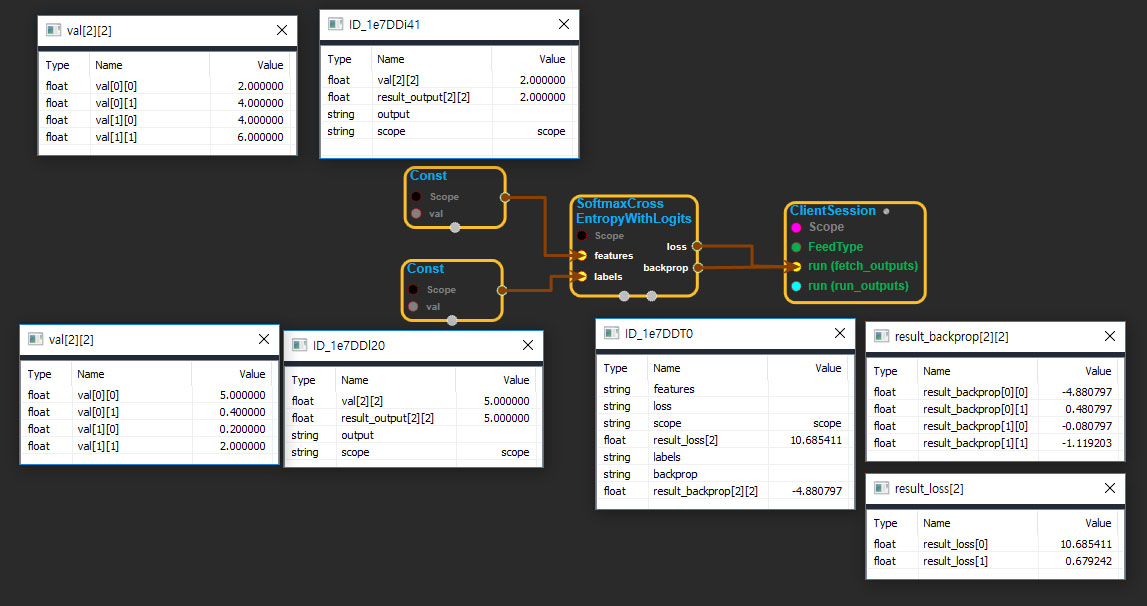

SoftmaxCrossEntropyWithLogits block

Source link: https://github.com/EXPNUNI/enuSpaceTensorflow/blob/master/enuSpaceTensorflow/tf_nn.cpp

Argument:

- Scope scope: A Scope object. (A scope is generated automatically for each page, so the scope pin is left unconnected.)

- Input features: Connect an Input node.

- Input labels: Connect an Input node.

Return:

- Output loss: Output object of the SoftmaxCrossEntropyWithLogits class.

- Output backprop: Output object of the SoftmaxCrossEntropyWithLogits class.

Result:

- std::vector<Tensor> result_loss: The loss result returned by running the session.

- std::vector<Tensor> result_backprop: The backprop result returned by running the session.

Using Method